GCPR VMV | 2026

GCPR + VMV 2026 Program

This page will collect talks, keynotes, workshops, and other sessions of the program as they are announced.

Invited Talks

Tutorial on Scaling Open-Source Vision Foundation Models

Organizers: Shashanka Venkataramanan, Lukas Knobel

Workshop session: Half day, Tuesday afternoon

Vision foundation models trained on massive, proprietary datasets currently dominate the field, creating a significant barrier for the broader research community. This tutorial challenges that narrative by demonstrating how researchers can train vision encoders entirely on open-source datasets and still match or even surpass the performance of closed-source counterparts. Moving beyond theoretical assumptions, we break down the exact engineering requirements needed to close this gap, whether you are working with a massive cluster or a smaller multi-node setup.

Scaling models introduces severe optimization challenges, from loss spikes to representation collapse. We detail how to maintain training stability when moving to models with a few hundred million parameters, and we translate these lessons into practical strategies for smaller-scale training. By monitoring gradients and architectural bottlenecks, researchers can achieve steady convergence and shorter training times, regardless of their total FLOPs budget.
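As a rough illustration of what "monitoring gradients" can look like in practice, the sketch below flags training steps whose global gradient norm jumps well above an exponential moving average (EMA) of earlier steps, a common early warning for loss spikes. The helper name `flag_gradient_spikes` and its `beta`, `threshold`, and `warmup` defaults are hypothetical choices for illustration, not the organizers' method.

```python
from typing import List, Optional, Sequence


def flag_gradient_spikes(
    grad_norms: Sequence[float],
    beta: float = 0.9,
    threshold: float = 3.0,
    warmup: int = 5,
) -> List[int]:
    """Return indices of steps whose gradient norm exceeds `threshold` times
    the EMA of the preceding (non-spike) steps.

    Hypothetical helper: beta, threshold and warmup are illustrative defaults.
    """
    spikes: List[int] = []
    ema: Optional[float] = None
    for step, norm in enumerate(grad_norms):
        if ema is not None and step >= warmup and norm > threshold * ema:
            spikes.append(step)
            # Skip the EMA update so a single outlier does not
            # inflate the baseline used for later steps.
            continue
        ema = norm if ema is None else beta * ema + (1.0 - beta) * norm
    return spikes


if __name__ == "__main__":
    norms = [1.0] * 10 + [10.0] + [1.0] * 5
    print(flag_gradient_spikes(norms))
```

In a PyTorch training loop, the per-step norm could be taken from the value returned by `torch.nn.utils.clip_grad_norm_`, which is the total gradient norm computed before clipping.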

The most significant lever for performance is not just more compute; it is better data. Moving away from indiscriminate web scraping, we examine how advanced filtering strategies, such as semantic deduplication and entropy-based sampling, directly shape a model’s zero-shot transfer capabilities. These techniques allow researchers to achieve superior feature quality with a fraction of the data, making high-performance training accessible to those without petascale storage.
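To make the idea of semantic deduplication concrete, here is a minimal sketch that greedily keeps a sample only if its embedding has cosine similarity below a threshold to every sample kept so far. It assumes embeddings have already been produced by some encoder; the function names and the 0.95 threshold are hypothetical, and this is not the specific filtering pipeline covered in the tutorial.

```python
import math
from typing import List, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def semantic_dedup(
    embeddings: Sequence[Sequence[float]], threshold: float = 0.95
) -> List[int]:
    """Greedy semantic deduplication (hypothetical helper).

    Returns the indices of embeddings whose cosine similarity to all
    previously kept embeddings is below `threshold`; near-duplicates
    of an already kept sample are dropped.
    """
    kept: List[int] = []
    for i, emb in enumerate(embeddings):
        if all(cosine_similarity(emb, embeddings[j]) < threshold for j in kept):
            kept.append(i)
    return kept


if __name__ == "__main__":
    embs = [[1.0, 0.0], [0.99, 0.01], [0.0, 1.0], [0.7, 0.7]]
    print(semantic_dedup(embs))
```

This pairwise comparison is quadratic in the number of samples; at web scale one would typically cluster the embeddings first and compare only within clusters.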

What this tutorial aims to achieve:

- Demonstrate how vision encoders trained exclusively on public data (like DataComp or LAION) can achieve parity with proprietary models across standard benchmarks.

- Provide architectural and hyperparameter strategies to maintain steady convergence when scaling vision foundation models.

- Teach algorithmic data filtering techniques that shift the focus from raw data volume to high-quality, balanced datasets, effectively improving model robustness.

- Bridge the gap between "big lab" infrastructure and academic research, providing a roadmap for resource-efficient foundation model development.

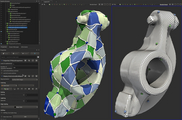

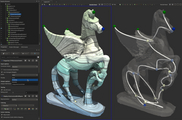

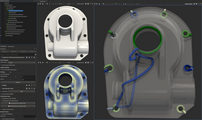

Tutorial on Topological Data Analysis for Surface Meshes

Organizer: Jonas Lukasczyk

Target Audience: Novice

Workshop session: Half day

This tutorial introduces the foundations and practical applications of Topological Data Analysis (TDA) for surface meshes. TDA has emerged as a powerful framework for extracting robust, multi-scale structural features from complex data, making it particularly valuable for visualization, geometry processing, and scientific computing.

In this tutorial, participants will learn how to use the Topology Toolkit (TTK, https://topology-tool-kit.github.io/), a software library that provides a wide range of TDA algorithms for surface meshes. These include topological skeletonization and remeshing, the computation of persistent generators for detecting connectivity, loops, and holes, and topology-driven shape matching techniques. TTK is integrated into ParaView, which can be easily installed on Linux, Windows, and macOS (https://www.paraview.org/download/). The tutorial is highly interactive: attendees will work through guided, hands-on exercises that teach them how to apply TDA methods within their own workflows.
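For readers new to persistence, the sketch below computes 0-dimensional persistence pairs (the birth and death of connected components) for a scalar function on mesh vertices, using union-find and the elder rule on a lower-star filtration. It is a plain-Python illustration of the underlying idea only; it does not use TTK or reflect its API, and the function name and edge-list input format are assumptions.

```python
import math
from typing import List, Sequence, Tuple


def zero_dim_persistence(
    values: Sequence[float], edges: Sequence[Tuple[int, int]]
) -> List[Tuple[float, float]]:
    """0-dimensional persistence pairs of a vertex scalar field (hypothetical helper).

    `edges` lists the mesh edges as vertex-index pairs. Zero-persistence pairs
    are omitted; the component of the global minimum never dies (death = inf).
    """
    n = len(values)
    adjacency: List[List[int]] = [[] for _ in range(n)]
    for a, b in edges:
        adjacency[a].append(b)
        adjacency[b].append(a)

    # Process vertices by increasing value; ties are broken by vertex index.
    order = sorted(range(n), key=lambda v: (values[v], v))
    rank = {v: i for i, v in enumerate(order)}

    parent = list(range(n))
    oldest = list(range(n))  # minimum vertex of the component rooted at each root

    def find(x: int) -> int:
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving
            x = parent[x]
        return x

    pairs: List[Tuple[float, float]] = []
    for v in order:
        for u in adjacency[v]:
            if rank[u] > rank[v]:
                continue  # u has not entered the filtration yet
            ru, rv = find(u), find(v)
            if ru == rv:
                continue
            # Elder rule: the component whose minimum appeared later dies at v.
            if rank[oldest[ru]] < rank[oldest[rv]]:
                elder, younger = ru, rv
            else:
                elder, younger = rv, ru
            birth, death = values[oldest[younger]], values[v]
            if death > birth:
                pairs.append((birth, death))
            parent[younger] = elder

    # Surviving components (one per connected piece of the mesh) never die.
    for x in range(n):
        if find(x) == x:
            pairs.append((values[oldest[x]], math.inf))
    return sorted(pairs)


if __name__ == "__main__":
    # A path of five vertices with values 0, 3, 1, 4, 2.
    print(zero_dim_persistence([0, 3, 1, 4, 2], [(0, 1), (1, 2), (2, 3), (3, 4)]))
```

Each finite pair records a local minimum whose basin merges into an older one at a saddle; loops and holes correspond to higher-dimensional pairs, which this sketch does not compute.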

Participants are kindly asked to bring their laptops and to install ParaView with the TTK plugin before the tutorial.